The vast majority of enterprises recognize the wisdom of powering their API tests with data.

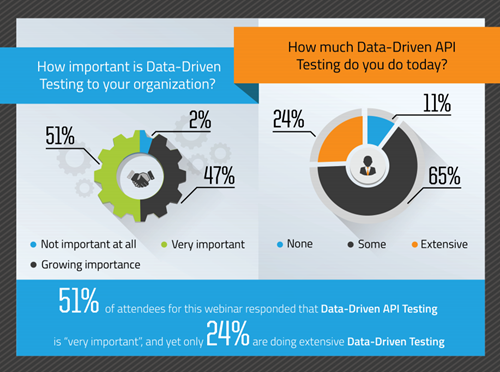

In a live webinar we held on data-driven API testing, we polled attendees on how important they consider data-driven testing and how much of this strategy they actually employ in their own organizations.

The results were telling.

Although over half of respondents indicated that data-driven API testing is a “very important” priority, only 24% of the audience was employing this strategy extensively. This gap exposes APIs – and the businesses that rely on them – to significant risk.

Here are 3 reasons why your goals for testing aren’t becoming reality.

1. Business logic isn’t fully exercised

Although every API is unique, it’s common for inbound messages to incorporate dozens of parameters, each with its own range of potential values.

The number of potential interactions among these parameters is larger still.

A handful of hard-coded test cases can’t possibly explore all of these permutations, meaning that only a fraction of the underlying application code gets evaluated.
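To get a feel for the scale, the sketch below counts the combinations for a small request with four parameters. The parameter names and values are hypothetical, invented purely for illustration; a real API would typically have far more of both.

```python
from itertools import product

# Hypothetical parameters for an order-creation request (illustrative only).
parameters = {
    "currency": ["USD", "EUR", "GBP"],
    "shipping": ["standard", "express", "pickup"],
    "quantity": [0, 1, 999, 1000],
    "coupon": [None, "VALID10", "EXPIRED"],
}

# Build every combination of one value per parameter.
combinations = [
    dict(zip(parameters, values)) for values in product(*parameters.values())
]

print(len(combinations))  # 3 * 3 * 4 * 3 = 108
```

Even this toy request yields 108 distinct inputs, which is already well beyond what a few hand-written cases would cover.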

The upshot is that the API appears to have higher quality than it really does. It’s even worse when you recall that business users are frequently separated from the QA team.

For example, consider what happens when a tester encounters a date field to be sent to an API. With no knowledge of the distinct input requirements for that field – and a lack of supporting software tooling to make it easy to supply a variety of values – the tester will likely just plug in a random entry.

The end result is that the business logic so carefully encoded in the API will not be properly tested, which makes hard-to-diagnose problems in production far more likely.
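As a rough illustration of the alternative, the sketch below drives a single date field with a table of values rather than one arbitrary entry. The `validate_ship_date` function is a hypothetical stand-in for the API's business rule (assumed here to be: an ISO date, not in the past, at most 30 days ahead). A real test would send each value to the API instead.

```python
from datetime import date, timedelta

MAX_DAYS_AHEAD = 30  # assumed business rule, for illustration only


def validate_ship_date(value: str, today: date) -> bool:
    """Hypothetical stand-in for the API's date rule."""
    try:
        parsed = date.fromisoformat(value)
    except ValueError:
        return False
    return today <= parsed <= today + timedelta(days=MAX_DAYS_AHEAD)


def run_date_cases(today: date) -> list[tuple[str, bool, bool]]:
    """Run every row of the data table; return (input, expected, actual)."""
    cases = [
        (today.isoformat(), True),                          # lower boundary
        ((today - timedelta(days=1)).isoformat(), False),   # just before it
        ((today + timedelta(days=30)).isoformat(), True),   # upper boundary
        ((today + timedelta(days=31)).isoformat(), False),  # just after it
        ("2023-02-30", False),                              # impossible date
        ("", False),                                        # empty value
        ("not-a-date", False),                              # wrong format
    ]
    return [
        (value, expected, validate_ship_date(value, today))
        for value, expected in cases
    ]


results = run_date_cases(date(2024, 1, 15))
failures = [row for row in results if row[1] != row[2]]
print(f"{len(results)} cases, {len(failures)} failures")
```

The point of the table is that boundary and malformed values sit next to the expected outcome, so the business rule is written down once and exercised on every run.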

2. Latency isn’t accurately measured

An API is not an island: a complex, multilayered stack of technology – including database servers, web servers, application servers, and other infrastructure – joins forces to bring it to life. Consequently, it’s imperative to consider the impact of your testing strategy on the entire API stack.

For example, if testers repeatedly feed the same minimal, static set of input values to the API, its responses will be served from cache and performance will appear blindingly fast, at least during testing.

This provides yet another false sense of security: in production, the API will suddenly start performing unpredictably, and may even seem to be plagued by hard-to-reproduce bugs.
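The effect is easy to reproduce in miniature. In the sketch below, `slow_backend_lookup` is a hypothetical stand-in for a database query behind the API, and Python's `functools.lru_cache` plays the role of whatever cache sits in the real stack. The example counts backend calls instead of timing them, so the result does not depend on the machine it runs on.

```python
from functools import lru_cache

backend_calls = 0


@lru_cache(maxsize=128)
def slow_backend_lookup(customer_id: int) -> str:
    """Hypothetical stand-in for an expensive database query."""
    global backend_calls
    backend_calls += 1
    return f"customer-{customer_id}"


def backend_hits(customer_ids: list[int]) -> int:
    """Return how many requests actually reached the backend."""
    global backend_calls
    slow_backend_lookup.cache_clear()  # start each run with a cold cache
    backend_calls = 0
    for customer_id in customer_ids:
        slow_backend_lookup(customer_id)
    return backend_calls


static_hits = backend_hits([42] * 100)        # the same input, 100 times
varied_hits = backend_hits(list(range(100)))  # 100 distinct inputs

print(static_hits, varied_hits)  # 1 100
```

With static input, only one of the hundred requests does any real work, so the measured latency says little about how the stack behaves under varied production traffic.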

3. Automation isn’t possible

Today, many software development organizations have adopted agile delivery methodologies.

Release cycles have shortened from weeks to hours, and the only credible way to keep up with this blistering pace is to apply automation – for builds as well as testing.

Unfortunately, a lack of data-driven testing means manually invoking APIs using a limited set of values coupled with time-consuming inspection of individual request/response messages.

These monotonous, error-prone maneuvers are diametrically opposed to what’s possible using automation.
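Here is a minimal sketch of what the automated alternative might look like: the test data lives in a CSV source, and a loop sends each row and checks the response without anyone inspecting messages by hand. The `call_discount_api` function and the column names are hypothetical; the function stands in for a real HTTP call so that the example stays self-contained.

```python
import csv
import io

# Inline CSV to keep the example self-contained; in practice this could be
# a file or database table maintained alongside the tests.
TEST_DATA = """order_total,coupon,expected_total
100.00,,100.00
100.00,SAVE10,90.00
100.00,BOGUS,100.00
0.00,SAVE10,0.00
"""


def call_discount_api(order_total: float, coupon: str) -> float:
    """Hypothetical stand-in for an HTTP request to the API under test."""
    discount = 0.10 if coupon == "SAVE10" else 0.0
    return round(order_total * (1 - discount), 2)


def run_suite(source: str) -> list[str]:
    """Run every data row and return a description of each failing case."""
    failures = []
    for row in csv.DictReader(io.StringIO(source)):
        actual = call_discount_api(float(row["order_total"]), row["coupon"])
        expected = float(row["expected_total"])
        if actual != expected:
            failures.append(f"{row}: expected {expected}, got {actual}")
    return failures


failures = run_suite(TEST_DATA)
print("PASS" if not failures else "\n".join(failures))
```

Because the loop returns a plain list of failures, it can run unattended as part of a build, and adding a new scenario means adding a row of data rather than writing another test.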

Luckily, the quality, robustness, and affordability of modern API testing platforms such as ReadyAPI have made data-driven testing possible for every organization: there’s no longer a need – or justification – for a tester to painstakingly type in API-bound data and then eyeball the results to confirm their accuracy.

We want to help you bridge the gap between your goals and reality, which is why ReadyAPI, part of the suite of tools from Ready API, makes sure that you can deliver accurate, fast, safe web services on time. For REST, SOAP and other popular API and IoT protocols, ReadyAPI provides the industry's most comprehensive and easy-to-learn functional testing capabilities. Based on open core technology proven by millions of community members, ReadyAPI helps you ensure that your APIs perform as intended and fit your business requirements, timeframes, and team skill sets right from day one.
